Bits and Pieces

-

"The Paranoid Style in American Politics," Richard Hofstadter, Harper's, 1964

Two books which appeared in 1835 described the new danger to the American way of life and may be taken as expressions of the anti-Catholic mentality. One, Foreign Conspiracies against the Liberties of the United States, was from the hand of the celebrated painter and inventor of the telegraph, S.F.B. Morse. "A conspiracy exists," Morse proclaimed, and "its plans are already in operation . . . we are attacked in a vulnerable quarter which cannot be defended by our ships, our forts, or our armies." The main source of the conspiracy Morse found in Metternich's government: "Austria is now acting in this country. She has devised a grand scheme. She has organized a great plan for doing something here. . . . She has her Jesuit missionaries traveling through the land; she has supplied them with money, and has furnished a fountain for a regular supply." Were the plot successful, Morse said, some scion of the House of Hapsburg would soon be installed as Emperor of the United States.

Events since 1939 have given the contemporary right-wing paranoid a vast theatre for his imagination, full of rich and proliferating detail, replete with realistic cues and undeniable proofs of the validity of his suspicions. The theatre of action is now the entire world, and he can draw not only on the events of World War II, but also on those of the Korean War and the Cold War. Any historian of warfare knows it is in good part a comedy of errors and a museum of incompetence; but if for every error and every act of incompetence one can substitute an act of treason, many points of fascinating interpretation are open to the paranoid imagination. In the end, the real mystery, for one who reads the primary works of paranoid scholarship, is not how the United States has been brought to its present dangerous position but how it has managed to survive at all.

The higher paranoid scholarship is nothing if not coherent--in fact the paranoid mind is far more coherent than the real world.

They see only the consequences of power--and this through distorting lenses--and have no chance to observe its actual machinery. A distinguished historian has said that one of the most valuable things about history is that it teaches us how things do not happen. It is precisely this kind of awareness that the paranoid fails to develop.

-

"Ahh, the quintessential village pub," [via]

- "When the Revolution Came for Amy Cuddy," NYT Magazine

- "Shackles and Dollars," Chronicle of Higher Education

And, especially, the work of cotton picking itself. As Baptist dug into his sources, he realized that antebellum cotton production had not just expanded. It had transformed. The amount that each slave picked per day more than tripled between 1805 and 1860. The reason, he argues, comes down largely to one word: torture. Baptist describes how planters assigned picking quotas to slaves, whipped them if they failed to meet the targets, and steadily increased picking expectations for workers. Slaves avoided the lash by learning to pick faster. To this metaphorical "whipping machine," Baptist attributes world-historical consequences. "Systematized torture" in "slave labor camps," he writes, was "crucial" to creating the cotton-fueled wealth of the Industrial Revolution.

-

"Why Are More American Teenagers Than Ever Suffering From Severe Anxiety?," New York Times Magazine

When I asked Eken about other common sources of worry among highly anxious kids, she didn't hesitate: social media. Anxious teenagers from all backgrounds are relentlessly comparing themselves with their peers, she said, and the results are almost uniformly distressing.

Anxious kids certainly existed before Instagram, but many of the parents I spoke to worried that their kids' digital habits--round-the-clock responding to texts, posting to social media, obsessively following the filtered exploits of peers--were partly to blame for their children's struggles. To my surprise, anxious teenagers tended to agree. At Mountain Valley, I listened as a college student went on a philosophical rant about his generation's relationship to social media. "I don't think we realize how much it's affecting our moods and personalities," he said. "Social media is a tool, but it's become this thing that we can't live without but that's making us crazy."

While smartphones can provoke anxiety, they can also serve as a handy avoidance strategy. At the height of his struggles, Jake spent hours at a time on his phone at home or at school. "It was a way for me not to think about classes and college, not to have to talk to people," he said. Jake's parents became so alarmed that they spoke to his psychiatrist about it and took his phone away a few hours each night.

At a workshop for parents last fall at the NW Anxiety Institute in Portland, Ore., Kevin Ashworth, the clinical director, warned them of the "illusion of control and certainty" that smartphones offer anxious young people desperate to manage their environments. "Teens will go places if they feel like they know everything that will happen, if they know everyone who will be there, if they can see who's checked in online," Ashworth told the parents. "But life doesn't always come with that kind of certainty, and they're never practicing the skill of rolling with the punches, of walking into an unknown or awkward social situation and learning that they can survive it."

-

"What Facebook Did to American Democracy," Alexis Madrigal, The Atlantic:

By late October, the role that Facebook might be playing in the Trump campaign--and more broadly--was emerging. Joshua Green and Issenberg reported a long feature on the data operation then in motion. The Trump campaign was working to suppress "idealistic white liberals, young women, and African Americans," and they'd be doing it with targeted, "dark" Facebook ads. These ads are only visible to the buyer, the ad recipients, and Facebook. No one who hasn't been targeted by then can see them. How was anyone supposed to know what was going on, when the key campaign terrain was literally invisible to outside observers?

-

"Have Smartphones Destroyed a Generation?," The Atlantic:

You might expect that teens spend so much time in these new spaces because it makes them happy, but most data suggest that it does not. The Monitoring the Future survey, funded by the National Institute on Drug Abuse and designed to be nationally representative, has asked 12th-graders more than 1,000 questions every year since 1975 and queried eighth- and 10th-graders since 1991. The survey asks teens how happy they are and also how much of their leisure time they spend on various activities, including nonscreen activities such as in-person social interaction and exercise, and, in recent years, screen activities such as using social media, texting, and browsing the web. The results could not be clearer: Teens who spend more time than average on screen activities are more likely to be unhappy, and those who spend more time than average on nonscreen activities are more likely to be happy.

-

"'Our minds can be hijacked': the tech insiders who fear a smartphone dystopia," The Guardian

But [Eyal] was defensive of the techniques he teaches, and dismissive of those who compare tech addiction to drugs. "We're not freebasing Facebook and injecting Instagram here," he said. He flashed up a slide of a shelf filled with sugary baked goods. "Just as we shouldn't blame the baker for making such delicious treats, we can't blame tech makers for making their products so good we want to use them," he said. "Of course that's what tech companies will do. And frankly: do we want it any other way?"

Without irony, Eyal finished his talk with some personal tips for resisting the lure of technology. He told his audience he uses a Chrome extension, called DF YouTube, "which scrubs out a lot of those external triggers" he writes about in his book, and recommended an app called Pocket Points that "rewards you for staying off your phone when you need to focus."

Finally, Eyal confided the lengths he goes to protect his own family. He has installed in his house an outlet timer connected to a router that cuts off access to the internet at a set time every day. "The idea is to remember that we are not powerless," he said. "We are in control."

Roger McNamee, a venture capitalist who benefited from hugely profitable investments in Google and Facebook, has grown disenchanted with both companies, arguing that their early missions have been distorted by the fortunes they have been able to earn through advertising.

...McNamee chooses his words carefully. "The people who run Facebook and Google are good people, whose well-intentioned strategies have led to horrific unintended consequences," he says. "The problem is that there is nothing the companies can do to address the harm unless they abandon their current advertising models."

James Williams does not believe talk of dystopia is far-fetched. The ex-Google strategist who built the metrics system for the company's global search advertising business, he has had a front-row view of an industry he describes as the "largest, most standardised and most centralised form of attentional control in human history."

Williams, 35, left Google last year, and is on the cusp of completing a PhD at Oxford University exploring the ethics of persuasive design. It is a journey that has led him to question whether democracy can survive the new technological age.

He says his epiphany came a few years ago, when he noticed he was surrounded by technology that was inhibiting him from concentrating on the things he wanted to focus on. "It was that kind of individual, existential realisation: what's going on?" he says. "Isn't technology supposed to be doing the complete opposite of this?"

-

Justice Stevens' dissent in D.C. v. Heller, on the interpretation of the Second Amendment:

Until today, it has been understood that legislatures may regulate the civilian use and misuse of firearms so long as they do not interfere with the preservation of a well-regulated militia. The Court's announcement of a new constitutional right to own and use firearms for private purposes upsets that settled understanding, but leaves for future cases the formidable task of defining the scope of permissible regulations. Today judicial craftsmen have confidently asserted that a policy choice that denies a "law-abiding, responsible citize[n]" the right to keep and use weapons in the home for self-defense is "off the table." Ante, at 64. Given the presumption that most citizens are law abiding, and the reality that the need to defend oneself may suddenly arise in a host of locations outside the home, I fear that the District's policy choice may well be just the first of an unknown number of dominoes to be knocked off the table.

-

"Taking on the NRA," John Cassidy, New Yorker:

The N.R.A.'s ability to mobilize is a classic example of what the advertising guru David Ogilvy called the power of one "big idea." Beginning in the nineteen-seventies, the N.R.A. relentlessly promoted the view that the right to own a gun is sacrosanct. Playing on fear of rising crime rates and distrust of government, it transformed the terms of the debate. As Ladd Everitt, of the Coalition to Stop Gun Violence, told me, "Gun-control people were rattling off public-health statistics to make their case, while the N.R.A. was connecting gun rights to core American values like individualism and personal liberty." The success of this strategy explains things that otherwise look anomalous, such as the refusal to be conciliatory even after killings that you'd think would be P.R. disasters. After the massacre of schoolchildren in Newtown, Connecticut, the N.R.A.'s C.E.O. sent a series of e-mails to his members warning them that anti-gun forces were going to use it to "ban your guns" and "destroy the Second Amendment."

-

"Battleground America," Jill Lepore, New Yorker, April 23, 2012:

In 1986, the N.R.A.'s interpretation of the Second Amendment achieved new legal authority with the passage of the Firearms Owners Protection Act, which repealed parts of the 1968 Gun Control Act by invoking "the rights of citizens . . . to keep and bear arms under the Second Amendment." This interpretation was supported by a growing body of scholarship, much of it funded by the N.R.A. According to the constitutional-law scholar Carl Bogus, at least sixteen of the twenty-seven law-review articles published between 1970 and 1989 that were favorable to the N.R.A.'s interpretation of the Second Amendment were "written by lawyers who had been directly employed by or represented the N.R.A. or other gun-rights organizations." In an interview, former Chief Justice Warren Burger said that the new interpretation of the Second Amendment was "one of the greatest pieces of fraud, I repeat the word 'fraud,' on the American public by special-interest groups that I have ever seen in my lifetime."

- Outstanding Reference Sources - Previous Winners

- A full run of Hunt's Merchants Magazine, 1839-1870 in PDF

- Fully searchable Enron email archive

- John Lauritz Larson, Internal Improvement: National Public Works and the Promise of Popular Government in the Early United States

- David M Henkin, The Postal Age

- Daniel Wickberg, The Senses of Humor: Self and Laughter in Modern America

- Kurt Vonnegut, Player Piano

- Gail Bederman, Manliness and Civilization

- "Myth, Rumor, and History: The Yankee Whittling Boy as Hero and Villain" [link]

- "Intellectual Property Law and History" [link]

- Adult New York Times Best Seller Lists for 1950 [link]

- JFK, Arts in America [link]

- Technology and Culture: Special Issue: Patents and Invention [link]

- 1958 Outstanding Books on Industrial Relations [link]

- Satisfactions in the white-collar job [link]

- The man on the assembly line, by Charles R. Walker and Robert H. Guest. [link]

- Power Structure Research and the Hope for Democracy [link]

- Some Problems in the Study of Organizational Culture [link]

Books

History stuff

-

Political cartoon showing man in military uniform, with epaulets and plumed hat, holding sword and seated on pile of skulls. A scathing attack on Whig principles, as embodied in their selection of a presidential candidate for 1848. Here the 'available candidate' is either Gen. Zachary Taylor or Winfield Scott, both of whom were contenders for the nomination before the June convention. The figure sits atop a pyramid of skulls, holding a blood-stained sword. The skulls and sword allude to the bloody but successful Mexican War campaigns waged by both Taylor and Scott, which earned them considerable popularity (a combination of attractiveness and credibility termed 'availability') among Whigs. The figure here has traditionally been identified as Taylor, but the flamboyant, plumed military hat and uniform are more in keeping with contemporary representations of Scott. The print may have appeared during the ground swell of popular support which arose for Scott as a rival to Zachary Taylor in the few months preceding the party's convention in Philadelphia on June 7, 1848. On June 9 Zachary Taylor captured the Whig nomination.

http://scienceblogs.com/goodmath/2007/07/14/a-laughable-laffer-curve-from/

-

Richard Van Atta on DARPA, Project Agile and William Godel:

Both authors spend the bulk of their books on Project Agile, a highly classified counterinsurgency program deployed in Vietnam and, eventually, other countries in Southeast Asia. The program began in 1961 under William Godel, deputy director of DARPA, whose role in the early years is little understood and not well-documented in the agency's archives. (However, much of the history of Godel and Agile was presented in detail in The Advanced Research Projects Agency, 1958-1974, by Richard J. Barber Associates, published in 1975.)

Each of these books presents compelling information about Godel's activities, indicating a program run amok and with little oversight. Out of Project Agile came such debacles as the "strategic hamlets" program of rural pacification; fallacious assessments of the Vietnamese people under the guise of social science; and the egregious use of chemical defoliants, particularly the infamous Agent Orange. In retrospect, much of Agile was naive, poorly managed, and rife with amateurism and even corruption. As Weinberger puts it, Godel "was running the Agile office as his own covert operations shop."

As a fascinating and excruciating example of errant public policy, these deep dives into the Godel episode are astounding. They portray an overzealous, misguided operator who hijacked a technological agency to perpetrate an outlandish and failed program of social engineering at a massive scale. The episode illuminates the fact that there existed at least two DARPAs with little in common: the "strategic" DARPA, pursuing missile defense and nuclear test detection; and the "operational" DARPA, in which programs such as Agile attempted to bring technology into a combat zone. The latter was hardly scientific and raised, as Weinberger states, a battle over competing visions over the agency's future. Neither book draws out the relevant lessons, however.

-

David Auerbach reviewing Kline's The Cybernetic Moment in Issues in Science and Technology:

In engaging the squishy realm of popular and intellectual social science in the latter half of the book, Kline steps into territory where it becomes increasingly uncertain whether the experts debating "information technology," such as management theorist Peter Drucker or sociologist Daniel Bell, had much knowledge of either information or technology.

-

From the authors' summary of "Association of Facebook Use With Compromised Well-Being: A Longitudinal Study":

Overall, our results showed that, while real-world social networks were positively associated with overall well-being, the use of Facebook was negatively associated with overall well-being. These results were particularly strong for mental health; most measures of Facebook use in one year predicted a decrease in mental health in a later year. We found consistently that both liking others' content and clicking links significantly predicted a subsequent reduction in self-reported physical health, mental health, and life satisfaction.

-

Mat Honan on Apple's media strategy:

No other person or entity, no politician or even Hollywood franchise is so able to so fully peel away the layers of our daily reality in service to engineered desire. This is Apple's specialty. Its entire purpose is to make you pay attention to it; to make you want it. And it is very, very good at that. This was so fully on display Tuesday that it's worth examining and understanding.

-

John McPhee on non-fiction narrative structure:

Inspired by the preponderance of natural cycles in the Arctic, McPhee shapes a story about Alaska around a circle. The first half of the arc will take place linearly, progressing from the beginning in the straightforward humanly experienced direction of time. Halfway through, the narrative flashes back to an earlier point, which we follow to the end, which is also the beginning. McPhee's concern is less a desire to ape the movement of the moon, and more that the trip's most dramatic event (a grizzly bear encounter) occurs earlier than it would ideally, which is "about three-fifths of the way along, a natural place for a high moment in any dramatic structure." McPhee makes even the limited power of narrative sound awesome: "You're a nonfiction writer. You can't move that bear around like a king's pawn or a queen's bishop. But you can, to an important and effective extent, arrange a structure that is completely faithful to fact." You can't move bears, but you can move time, and that's just as good.

Some McPhee pieces to look at some day:

- "Looking for a Ship"

- "A Fleet of One"

- "Oranges"

- And his new book, Draft No. 4: On the Writing Process

-

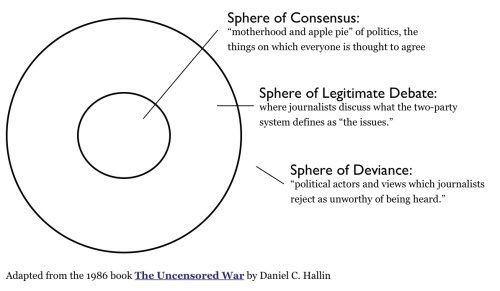

On Hallin's Spheres and journalistic consensus:

One thing to note is that this isn't at all a mechanism to determine where truth lies, or to assign moral weight to any argument.

It's a concept designed to help US, the public, understand the way that journalism historically communicates to us.

Something you may notice right away is that journalism, when it makes (largely unconscious) choices about what belongs in which sphere, uses the language of the status quo, of hegemony, neither of which are fixed, absolute points.

The discussion I think we're having, in a larger sense, is about what "consensus" means at this current, unique-feeling point in history.

-

Joe Edelman on "What's Next":

My projects ran into an interesting and thorny problem. A few designers who watched my talks, attended workshops I led with Tristan, etc, "got it". They could see which product and metrics changes were necessary. Many of these teams even shipped changes as a result. But for the majority--including many talented and brilliant designers--they found our ideas exciting, but somehow intangible. Our concepts couldn't find a place in their working minds or their team discussions.

After many conversations, I traced the problem to a deep divide in how designers and engineers think about users: most imagine their users as defined by their immediate goals or by their apparent preferences. If their product helps the user achieve a goal (e.g., "responding to an email") or satisfies an apparent preference (such as "seeing photos of my friends") then--case closed--the product must be helping the user.

But perhaps 5% of designers and engineers have a different view--they sense that users have interests or aims which go beyond their immediate goals or apparent preferences. Thus a product which helps with goals, or which satisfies preferences, could nonetheless be a waste of a user's time. And a user could--despite responding to many emails and seeing many photos--eventually regret using such a product, because the product derailed a deeper concern the user has.

To bring the design and engineering community along with me, I would need to tell the 95%--oriented around immediate goals and preferences--what they were missing. But what exactly are they missing? What is more important to the user than her immediate goals and preferences?

This question--it turns out--is dear to philosophers concerned with choice and agency. Economist and philosopher Amartya Sen won a Nobel Prize for pointing out how people's interests cannot be defined solely by their present goals or preferences.

So there's our answer: What is even more important to a person than their current goals or preferences? The process of refining, discovering, and clarifying those goals and preferences.

I believe this idea amounts to a new and transformative view of human nature. If enough people start to see themselves in this way--as participants in a process that updates their goals and preferences--it will transform society.

Society moves forward when the design principles for human systems change. When a new principle (like "freedom" or "fairness" or "meritocracy" or "structural oppression") appears and becomes widely recognized, people redesign everything (schools, local businesses, etc) in accordance with it.

Today, many principles vie for this status: being "distributed", "peer to peer", getting "skin in the game", having a "flat hierarchy", being "commons-based", "sustainable", "zero-knowledge" and so on. But none of these have what it takes to transform society.

I conducted a study of which principles worked in the past. Those which transformed society were deeply connected to our human experience, led to new forms of intimacy between human beings, and ignited the public imagination with clear visions of change.

The process-oriented view meets these criteria. What society would help people determine their most meaningful goals and preferences? This question sparks the imagination. And the related design principles--exposure to the consequences of one's actions, discretion over the manner in which one works, and fostering capacity to handle hard truths together--suggest immediate changes to institutions and new forms of intimacy.

-

"What We Talk About When We Talk About and Exactly Like Trump," Teddy Wayne, NYT:

Mr. Trump has lodged his pronouncements in our collective memory like no other public figure in recent history. So quotable is his idiosyncratic communication, and so powerful are the tools with which he conveys it, that he has even altered how the rest of us regard and use certain parts of the language. Many people are saying--to borrow another of his idioms--the words of Mr. Trump, whether or not we like him.

-

On Dr. William Shockley, the founder of Silicon Valley:

Why the ambivalence? Shockley was a founding father of Silicon Valley--a Nobel Prize-winning physicist who somewhat literally put Mountain View on the map. The city was so unknown when he set up shop there in 1956 that he had to describe it to friends as the "southern edge of Palo Alto." But Shockley left a distressing legacy, and he's a complicated historical figure to remember with a monument--particularly in California, where people prefer to imagine the future rather than reckon with the past. ("In California," Joan Didion once put it, "we did not believe that history could bloody the land, or even touch it.") In the last two and a half decades of his life, Shockley turned away from technology and began promoting the idea that intelligence was biologically determined--with blacks cognitively subordinate to whites--arguing under the auspices of estimable science that without forced sterilization of those with inferior intelligence, the world would be plunged into a dysgenic panic. He claimed to his death in 1989 that he was acting in the service of mankind, according to statistical principles, and was constrained only by the limits of intellectual decorum. That is, he might have said, by a "politically correct monoculture that maintains its hold by shaming dissenters into silence."

Haunting these historical treatments--as well as Damore's memo--is Shockley's broader intellectual legacy, which lurks on, insidiously, in the contours of Silicon Valley. It rests on Shockley's rigid conviction in the rightness of his own views, in the pure empiricism of what he saw as the cold facts, in an understanding of ethics as derived from data sets and allowed to be deaf to any outside concern. Shockley was certain not only that he alone was correct but that society was simply too close-minded and moralistic to listen. He kept in his files a letter from a friend that compared criticism of his research into race and intelligence to the persecution Galileo Galilei experienced under the Inquisition. It's that sense that lingers on in the scene: that one's arguments are a truth to be set free by the hard-fought march of scientific progress, that the only thing clouding the better judgment of those who might understand is a mess of arbitrary social restrictions as oppressive as a medieval inquisition.

This set includes not only other Google employees who agreed with Damore on internal listservs; it also comprises the outside engineers who chorused on Reddit and 4chan that his case testified to the need of anti-diversity causes like Gamergate. And the echo reached powerful venture capitalists and respected tech entrepreneurs who found themselves caught between a corporate impulse to accede to public outcry and an iconoclastic desire, powerful in their industry, to say and do what no one else would dare. But this desire to cast aside consensus views--to see individual thinking as always superior to social understanding or collective goals--permits those possessed of sufficient arrogance and faith in bad methodology to demean the validity and function of moral values in the service of an amorality being redefined as some purer truth. It brings to mind something the philosopher Hans Jonas once wrote about the search for ethics in an age of technology: "We need wisdom most when we believe in it the least."

-

Heller on Ernest Elmo Calkins, the ad man who brought Modernism to America:

Later in a 1933 article "The Dividends of Beauty," featured in Advertising Arts, Calkins summarized the development this way: "Modernism offered the opportunity of expressing the inexpressible, of suggesting not so much a motor car as speed, not so much a gown as style, not so much a compact as beauty." If this had a similar ring to one of F.T. Marinetti’s Futurist manifestoes, it was because Calkins studied and borrowed heavily from the European avant-garde.

In Calkins' view, advertising was the fuel running the engines of industry. "The styling of manufactured goods," he wrote in "Advertising Art in the United States..." (Modern Publicity), "is a byproduct of improved advertising design...The technical term for this idea is obsoletism. We no longer wait for things to wear out. We displace them with others that are not more efficient but more attractive." Although clothing manufacturers had for decades been changing styles annually to increase consumer interest, industry had not adopted this strategy until Calkins made it his mission. "It seemed reasonable that if design and color made advertising more acceptable to the buying public," he wrote, "design and color would add salability of goods." He also noted that the majority of consumers were women, who indeed were accustomed to changes in fashions and were happily seduced by the sleek new designs.

-

Massimo Vignelli, via

More on the history of A4 and Letter paper sizes:

The choice of paper size is one of the first of any given work to be printed. There are two basic paper size systems in the world: the international A sizes, and the American sizes.

The international Standard paper sizes, called the A series, is based on a golden rectangle, the divine proportion. It is extremely handsome and practical as well. It is adopted by many countries around the world and is based on the German DIN metric standards. The United States uses a basic letter size (8 1/2 x 11") of ugly proportions, and results in complete chaos with an endless amount of paper sizes. It is a by-product of the culture of free enterprise, competition, and waste. Just another example of the misinterpretation of freedom.

A4 (21cm x 29.7cm or 8.25" x 11.67") doesn't jump out as an obviously neat imperial or metric measure, but A4 is part of an official metric standard. It was set in 1975 and is based on a German standard originally from 1922. The key feature of this paper size is that A4 is half the size of A3, which in turn is half the size of A2 and so on up to A0, the largest in the range.

A0 paper is 1 metre squared in area, so why isn't it just 1m x 1m for ease? Because when a 1m x 1m square is halved (the most cost-effective way to produce new sizes from large paper sheets), the new rectangular size no longer has the same shape as the original--any artwork resized during reproduction wouldn't fit the page properly. Not helpful. Halve the paper size again and it becomes square again, so there would be two very different shapes of paper throughout the range. For A-series paper, smaller sizes are exactly half the previous paper size in every case, and importantly they are also exactly the same shape from one size to the next, with a width-to-height ratio of 1:1.41.

This means an A4 page can be scaled up by 41% and all the artwork and text is the perfect width and height to fill an A3 page. Similarly, scaling A4 artwork down to 71% of its original size will see it fit perfectly on an A5 page with no unpleasant stretching, squashing, or redesigning of artwork required (Note that PagePlus and other apps can perform this scaling automatically on output). This "magic number" for paper aspect ratio, 1 to the square root of 2, was first suggested way back in 1786.
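The arithmetic in the passage above can be checked with a short sketch. It assumes only what the quote states: A0 has an area of one square metre, each smaller size is the previous one halved, and the sides are in the ratio of 1 to the square root of 2. The function names are illustrative, and the sizes are left unrounded, whereas published A-series dimensions are given in whole millimetres.

```python
import math


def a_series_size(n):
    """Return the unrounded (width_mm, height_mm) of sheet An.

    Assumption: A0 has an area of 1 m^2 and height / width = sqrt(2).
    """
    width = 1000.0 / math.sqrt(math.sqrt(2))  # A0 short side in mm
    height = width * math.sqrt(2)             # A0 long side in mm
    for _ in range(n):
        # Cut the sheet in half across its long side:
        # the old short side becomes the new long side.
        width, height = height / 2.0, width
    return width, height


def aspect_ratio(n):
    """Long side divided by short side for sheet An."""
    width, height = a_series_size(n)
    return height / width


def scale_factor(src, dst):
    """Linear scale that maps artwork on A<src> onto A<dst>."""
    return a_series_size(dst)[0] / a_series_size(src)[0]


if __name__ == "__main__":
    for n in range(6):
        w, h = a_series_size(n)
        print(f"A{n}: {w:.1f} x {h:.1f} mm, ratio {aspect_ratio(n):.4f}")
    print(f"A4 -> A3 scale: {scale_factor(4, 3):.4f}")
    print(f"A4 -> A5 scale: {scale_factor(4, 5):.4f}")
```

Because halving a 1-to-root-2 rectangle yields another 1-to-root-2 rectangle, the ratio printed for every size is the same, and the two scale factors come out as the square root of 2 and its reciprocal, which are the "41%" enlargement and "71%" reduction the passage describes.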

-

Jenny Odell, "There's No Such Thing as a Free Watch"

Whatever and wherever it is, the entity in question is using dropshipping, a method whereby a company merely forwards the order from the customer to the supplier / manufacturer, who in turn ships the product directly to the customer. Mentions of "Made in China" stickers, watches coming in clear plastic bags, the MOJUE box, etc. point to the fact that the company or entity is not actually handling (or branding) the watches before they arrive. In fact, Shopify, which is the platform used by Folsom & Co, Soficoastal, etc., has a blog post specifically advising users on dropshipping items from Aliexpress. In the FAQ section of Folsom & Co.'s site, they state: "like many great American companies, we currently ship from our warehouse in Asia." Such a "warehouse" can only be understood metaphorically, as the process likely involves an entire network of warehouses and distributors, none of which are owned by Folsom & Co.

- Richard Rorty:

It seems to me that the regulative idea that we heirs of the Enlightenment, we Socratists, most frequently use to criticize the conduct of various conversational partners is that of 'needing education in order to outgrow their primitive fear, hatreds, and superstitions' ... It is a concept which I, like most Americans who teach humanities or social science in colleges and universities, invoke when we try to arrange things so that students who enter as bigoted, homophobic, religious fundamentalists will leave college with views more like our own ... The fundamentalist parents of our fundamentalist students think that the entire 'American liberal establishment' is engaged in a conspiracy. The parents have a point. Their point is that we liberal teachers no more feel in a symmetrical communication situation when we talk with bigots than do kindergarten teachers talking with their students ... When we American college teachers encounter religious fundamentalists, we do not consider the possibility of reformulating our own practices of justification so as to give more weight to the authority of the Christian scriptures. Instead, we do our best to convince these students of the benefits of secularization. We assign first-person accounts of growing up homosexual to our homophobic students for the same reasons that German schoolteachers in the postwar period assigned The Diary of Anne Frank... You have to be educated in order to be ... a participant in our conversation ... So we are going to go right on trying to discredit you in the eyes of your children, trying to strip your fundamentalist religious community of dignity, trying to make your views seem silly rather than discussable. We are not so inclusivist as to tolerate intolerance such as yours ... I don't see anything herrschaftsfrei [domination free] about my handling of my fundamentalist students. 
Rather, I think those students are lucky to find themselves under the benevolent Herrschaft [domination] of people like me, and to have escaped the grip of their frightening, vicious, dangerous parents ... I am just as provincial and contextualist as the Nazi teachers who made their students read Der Sturmer; the only difference is that I serve a better cause.

-

Meaghan Garvey's review of Lust for Life

Since the drastically superior Paradise Edition reissue of Born to Die, Del Rey has neither swayed nor settled. Instead, doubling down on her palette of inky blues and blacks, the singer-songwriter has delivered a trio of dark, dense, radio-agnostic albums that stand wholly apart from any of her pop music peers. If there's anything about Del Rey that's obvious by now, it's that she means it--all of it. Every word, every sigh, every violin swell, the Whitman quotes and JFK fantasies and soft ice cream.

These are titles that may have once implied a campy wink but now appear entirely sincere--songs for figuring out exactly where the fuck we are now. And more than any specific predecessor within the folk canon, they remind me--as does much of Lust for Life--of the paintings of Edward Hopper, a realist who captured a new American landscape, as figurative as it was physical. Hopper painted isolated, voyeuristic scenes of the anxiety and ennui of an increasingly urbanized nation set against the totems of Americana (diners, motels, highway gas stations). His work buzzed with the tension between tradition and progress, the cold power of the new against the sublimity of the natural world. Like Hopper, Del Rey's realism functions doubly as impressionism--literal representation as a means to capture the feeling of life in America.

-

Meaghan Garvey's review of Black Ken

Such is the duality of Lil B: One minute he's a Zen monk, floating high above the trappings of society, and the next he's a red-eyed, surrealist gangster, one funny look away from tearing the club up. And there are occasionally confounding, mostly delightful sonic outliers throughout Black Ken’s back half: "Ride (Hold Up)," like a Clara Rockmore theremin performance interrupted by sepia-toned zaps of Detroit techno, feels at once bound inextricably to history and hovering extra-terrestrially above it. On "Turn Up (Till You Can't)", B applies his T-t-t-totally dude "California Boy" sing-song to Gothenburg-style Balearic pop, and "Zam Bose (In San Jose)" resists explanation entirely. But what ties Black Ken together, and what makes it not just a palatable but completely thrilling listen, like stumbling unwittingly into the best block party ever, is Lil B's production throughout--a wild but controlled stylistic breakthrough.

- "The Soundtrack of Your Life," New Yorker

People at Muzak sometimes speak of a song's "topology," the cultural and temporal associations that it carries with it, like a hidden refrain. When McKelvey works on a program for a client whose customers represent a range of ages--such as Old Navy, whose market extends from infants to adults--she has to accommodate more than one sensibility without offending any. The task is simplified somewhat by the fact that musical eras and genres are not always moored firmly in time. Elvis Presley (who is represented in the Well by fourteen hundred and five tracks) sounds dated to many people today, but teen-agers can listen to Beatles songs from just a few years later without necessarily thinking of them as oldies.

Spanning musical generations can pose technical challenges. If a track that was recorded last year is played immediately after one from the forties, fifties, or sixties, the difference in texture can be jarring. (Anyone who has downloaded music onto an iPod or other digital music player is familiar with the difficulty of maintaining consistency from song to song.) One of the techniques used at Muzak is dynamic range compression, which consists of turning down the loudest parts of a signal and then turning up the entire signal; it's the reason that television commercials often seem louder than the programs they interrupt even though the commercials and the programs are technically limited to the same sound level. In addition, audio architects frequently use tracks as bridges between music from different eras--say, placing a Verve remix of a jazz standard between an Ella Fitzgerald classic and a recent release by Macy Gray. Tracks in the Well are catalogued not only by artist and title but also by producer, label, and date. Recordings from particular studios in particular eras often share a characteristic sound--like wines from particular vineyards and vintages--and some juxtapositions work better than others.
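The two steps named above (turn down the loudest parts, then turn up the whole signal) can be sketched in a few lines. This is a deliberately simplified illustration, not how Muzak or any real compressor is implemented: it works sample by sample with no attack or release smoothing, and the `threshold` and `ratio` values are arbitrary assumptions.

```python
def compress(samples, threshold=0.5, ratio=4.0):
    """Naive dynamic range compression on samples in the range -1.0..1.0."""
    # Step 1: turn down the loudest parts. Anything above the threshold
    # keeps only 1/ratio of its excess over the threshold.
    squeezed = []
    for s in samples:
        level = abs(s)
        if level > threshold:
            level = threshold + (level - threshold) / ratio
        squeezed.append(level if s >= 0 else -level)

    # Step 2: turn up the entire signal ("make-up gain") so the peak
    # returns to where it was before compression.
    peak_in = max(abs(s) for s in samples)
    peak_out = max(abs(s) for s in squeezed)
    gain = peak_in / peak_out if peak_out else 1.0
    return [s * gain for s in squeezed]


quiet_and_loud = [0.1, -0.2, 1.0, -0.9]
print(compress(quiet_and_loud))
```

The peak level comes out unchanged while the quiet samples come out louder, which is the effect the article describes in television commercials: the same maximum level, but a higher average.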

The company that became Muzak was founded by George Owen Squier, a career Army officer, who was born in Dryden, Michigan, in 1865. Squier earned a doctorate in electrical engineering from Johns Hopkins University, in 1893, and he later devised a way to transmit battlefield radio messages clandestinely by using living trees as antennae. In the early nineteen-hundreds, he invented a system of "multiplex telephony and telegraphy by means of electric waves guided by wires"--transmitting multiple radio signals along the outside of electrical, telegraph, and telephone lines. Squier realized that his invention could be used to deliver music, news, and other programming directly to homes and businesses. In 1922 (after helping to establish a predecessor to the Air Force, and running the Army's Signal Corps during the First World War), he sold a license to the North American Company, a public-utility conglomerate, which formed a new subsidiary, Wired Radio, to develop the idea. One of the first test markets was Staten Island. Wired Radio customers there were given a boxy receiver, which looked a little like a gramophone, and the programming fee was added to their monthly electric bill. In 1934, Wired Radio--following the example of Eastman's brilliant coinage, Kodak--changed its name to Muzak. Squier died the same year, of pneumonia.

Several years later, Collis was doing an engineering job for Muzak. He told me, "I walked into a store and understood: this is just like a movie. The company has built a set, and they've hired actors and given them costumes and taught them their lines, and every day they open their doors and say, 'Let's put on a show.' It was retail theatre. And I realized then that Muzak's business wasn't really about selling music. It was about selling emotion--about finding the soundtrack that would make this store or that restaurant feel like something, rather than being just an intellectual proposition."

In 1997, the company adopted Collis's concept--the main element of which he called audio architecture--essentially in its entirety. Muzak went through an exhilarating period of self-examination and redefinition, and moved its headquarters from Seattle to Fort Mill--mainly for economic reasons, but also to sever itself from its stodgy past. In a relatively short time, it transformed itself from a company that sold boring background music into one that was engaged in a far more interesting activity, which it called audio branding.

- "Monumental Lies: Charlottesville's Lee Statue Was Designed to Erase a History We Need to Remember"

As Karen E. Fields and Barbara J. Fields emphasize in Racecraft, the "moonlight-and-magnolias" imagery, the cult of the heroic "Lost Cause of the Confederacy," and the whole Confederate nostalgia industry in general was a product of the New South, not the Old. It was archaizing, but not archaic. "Only in the 1890s did the Confederacy become an emotional symbol," they write.

Across the world, the late 19th century was epicenter for what historian Eric Hobsbawm termed "the invention of tradition." Massive economic and social change in the era of unchained capitalism triggered a revival in various rituals that looked to the past, to give the semblance of continuity and wholeness to a rapidly changing world.

Post-Reconstruction Virginia, however, invented a form of rose-colored romanticism that had a particularly sinister cast.

When President Trump pipes up for the "very fine people" unaccounted for within the weekend's "Unite the Right" protests--those innocent Southern history buffs hidden among the torch-wielding white nationalists--he is dissembling.

But he is also just re-activating the historic function these monuments were constructed to perform in the first place: not to communicate a real past, but to concoct an image of white greatness romanticized and sanitized enough to provide a semblance of a popular base for unscrupulous white elites.

- "The Monuments Must Go: An Open Letter From Great, Great Grandsons of Stonewall Jackson"

Confederate monuments like the Jackson statue were never intended as benign symbols. Rather, they were the clearly articulated artwork of white supremacy. Among many examples, we can see this plainly if we look at the dedication of a Confederate statue at the University of North Carolina in which a speaker proclaimed that the confederate soldier "saved the very life of the Anglo-Saxon race in the South." Disturbingly, he went on to recount a tale of performing the "pleasing duty" of "horse whipping" a black woman in front of Federal Soldiers. All over the South, this grotesque message was attached to similar monuments. As importantly, this message is clear to today's avowed white supremacists.

- "Study: Breitbart-led right-wing media ecosystem altered broader media agenda," CJR

Our analysis challenges a simple narrative that the internet as a technology is what fragments public discourse and polarizes opinions, by allowing us to inhabit filter bubbles or just read "the daily me." If technology were the most important driver towards a "post-truth" world, we would expect to see symmetric patterns on the left and the right. Instead, different internal political dynamics in the right and the left led to different patterns in the reception and use of the technology by each wing. While Facebook and Twitter certainly enabled right-wing media to circumvent the gatekeeping power of traditional media, the pattern was not symmetric.

- "How the Radical Right Played the Long Game and Won," NYT

Insight into this conundrum comes from an unlikely source, the life's work of the economist James McGill Buchanan -- who happens to be the subject of a new book, "Democracy in Chains: The Deep History of the Radical Right's Stealth Plan for America," by the historian Nancy MacLean. Buchanan, who was born in 1919 and died in 2013, advanced the field of public choice economics into politics, arguing that all interest groups push for their own agenda rather than the public good. According to this view, governing institutions cannot be trusted, which is why governing should be left to the market.

In the United States, promising and then delivering services and protections for the majority of voters provides a path for politicians to be popularly elected. Buchanan was concerned that this would lead to overinvestment in public services, as the majority would be all too willing to tax the wealthy minority to support these programs. So Buchanan came to a radical conclusion: Majority rule was an economic problem. "Despotism," he declared in his 1975 book "The Limits of Liberty," "may be the only organizational alternative to the political structure that we observe."

-

"I was told that a good trader is a creative trader, and a creative trader is a trader that can find arbitrage opportunities."

- Colin Whitehead, Ex-Trader, Enron, Smartest Guys in the Room

"I want you guys to get a little creative, and come up with a reason to go down."

"Like a forced outage type of thing?"

"Right."

-

"Better Business Through Sci-Fi," New Yorker [link]

One of SciFutures's more prominent contributors is Ken Liu, a Hugo Award-winning author and the translator of the popular Chinese science-fiction novel "The Three-Body Problem." Liu told me that he relishes the level of influence that the firm offers. "As a freelancing gig, it's not much money," he said; typically, stories pay a few hundred dollars. "But you have the chance to shape and impact the development of a technology that matters to you. At a minimum, you know that your story will be read by an executive, somebody who's actually able to decide whether to invest money and develop a product." Liu dismissed the notion that writing science fiction for corporate clients compromised something essential about the genre. "I'm not a big fan of this vision of the artist as some independent, amazing force for good," he said. "Everybody writes in a context for an audience."

The audience that gives SciFutures writers the most freedom to imagine negative outcomes is, not surprisingly, the military. "Those stories can be grittier," Phillips said. "They already do a lot of worst-case-scenario planning." Last year, she and her colleagues produced thirteen stories that were read and discussed in a workshop for forty senior officials from a range of NATO member countries. One involves a "smart gun" that gets hacked, nearly causing a massacre of civilians.

-

"When Silicon Valley Took Over Journalism," Franklin Foer [link]

Upworthy plucked videos and graphics from across the web, usually obscure stuff, then methodically injected elements that made them go viral. As psychologists know, humans are comfortable with ignorance, but they hate feeling deprived of information. Upworthy used this insight to pioneer a style of headline that explicitly teased readers, withholding just enough information to titillate them into reading further. For every item posted, Upworthy would write 25 different headlines, test all of them, and determine the most clickable of the bunch. Based on these results, it uncovered syntactical patterns that almost ensured hits. Classic examples: "9 out of 10 Americans Are Completely Wrong About This Mind-Blowing Fact" and "You Won't Believe What Happened Next." These formulas became commonplace on the web, until readers grew wise to them.

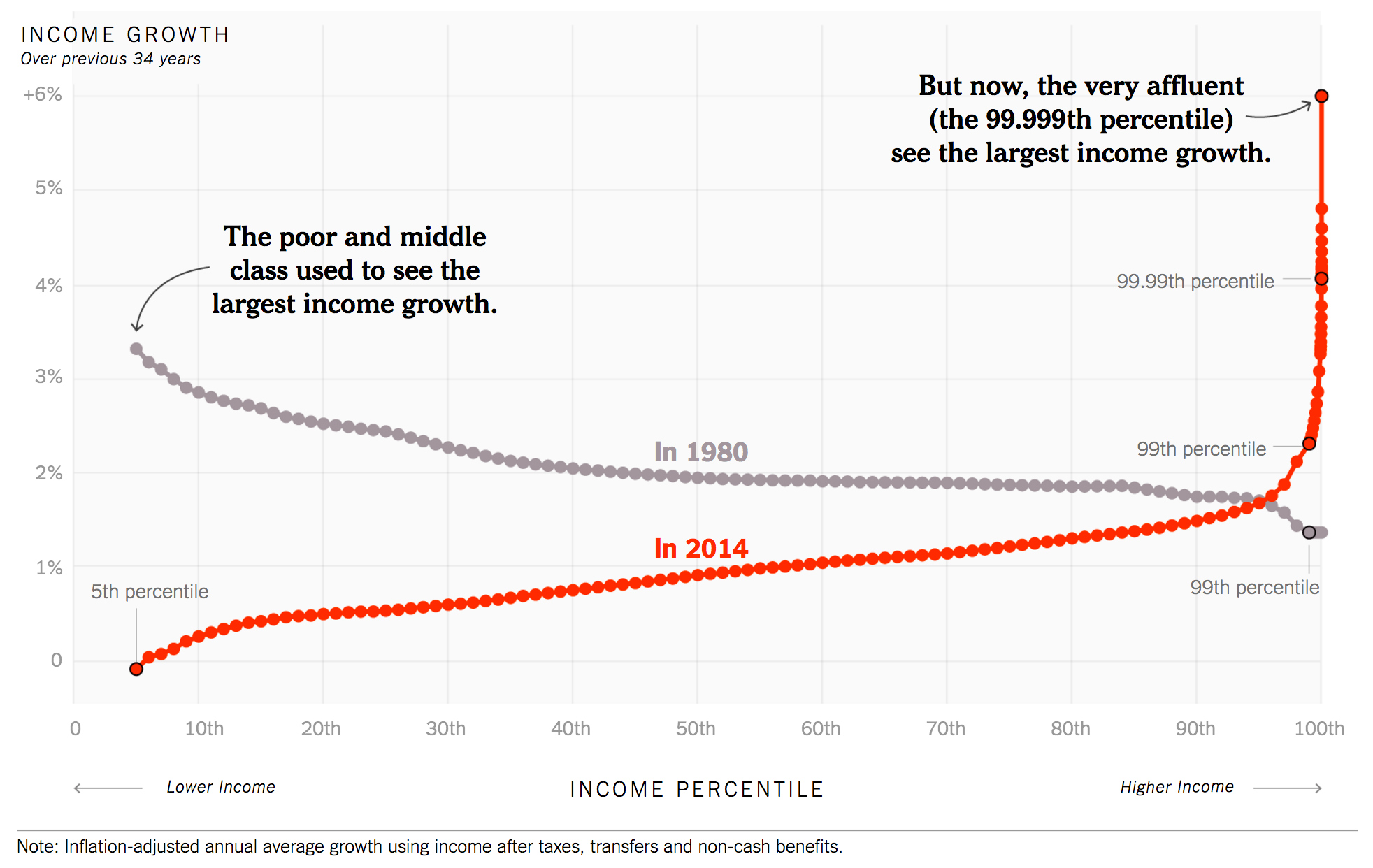

- Our Broken Economy, in One Simple Chart [link]

The message is straightforward. Only a few decades ago, the middle class and the poor weren't just receiving healthy raises. Their take-home pay was rising even more rapidly, in percentage terms, than the pay of the rich.

The post-inflation, after-tax raises that were typical for the middle class during the pre-1980 period--about 2 percent a year--translate into rapid gains in living standards. At that rate, a household's income almost doubles every 34 years. (The economists used 34-year windows to stay consistent with their original chart, which covered 1980 through 2014.)
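The "almost doubles" claim is ordinary compound growth and is easy to verify. A small check, assuming a flat 2 percent raise every year (the variable names are just for illustration):

```python
import math

annual_raise = 0.02
years = 34

# Income after 34 years of compounding, as a multiple of the starting income.
multiple = (1 + annual_raise) ** years

# Years needed for income to exactly double at this rate.
doubling_years = math.log(2) / math.log(1 + annual_raise)

print(f"After {years} years: {multiple:.2f}x the starting income")
print(f"Exact doubling takes about {doubling_years:.1f} years")
```

At 2 percent a year the multiple after 34 years is a little under 2, and a full doubling takes about 35 years, so "almost doubles" is a fair summary.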

- Alexandra Bell's "Counternarratives" [link]

- For some in the 1950s, the "creative" spirit was not about artistic expression but productive capacity and world-shaping power.

- Ludwig von Mises in Human Action, 1949:

The Creative Genius Far above the millions that come and pass away tower the pioneers, the men whose deeds and ideas cut out new paths for mankind. For the pioneering genius to create is the essence of life. To live means for him to create.

The activities of these prodigious men cannot be fully subsumed under the praxeological concept of labor. They are not labor because they are for the genius not means, but ends in themselves. He lives in creating and inventing. For him there is no leisure, only intermissions of temporary sterility and frustration. His incentive is not the desire to bring about a result, but the act of producing it. The accomplishment gratifies him neither mediately nor immediately. It does not gratify him mediately because his fellow men at best are unconcerned about it, more often even greet it with taunts, sneers, and persecution. Many a genius could have used his gifts to render his life agreeable and joyful; he did not even consider such a possibility and chose the thorny path without hesitation. The genius wants to accomplish what he considers his mission, even if he knows that he moves toward his own disaster.

Education, whatever benefits it may confer, is transmission of traditional doctrines and valuations; it is by necessity conservative. It produces imitation and routine, not improvement and progress. Innovators and creative geniuses cannot be reared in schools. They are precisely the men who defy what the school has taught them.

In order to succeed in business a man does not need a degree from a school of business administration. These schools train the subalterns for routine jobs. They certainly do not train entrepreneurs. An entrepreneur cannot be trained.

- Ayn Rand, Atlas Shrugged, 1957:

Rand links "productivity" and "creativity" in a similar fashion to the children's art spaces contemporaneously being staged abroad at American expositions.

"Productiveness is your acceptance of morality, your recognition of the fact that you choose to live--that productive work is the process by which man's consciousness controls his existence, a constant process of acquiring knowledge and shaping matter to fit one's purpose, of translating an idea into physical form, of remaking the earth in the image of one's values--that all work is creative work if done by a thinking mind, and no work is creative if done by a blank who repeats in uncritical stupor a routine he has learned from others--that your work is yours to choose, and the choice is as wide as your mind, that nothing more is possible to you and nothing less is human--that to cheat your way into a job bigger than your mind can handle is to become a fear corroded ape on borrowed motions and borrowed time, and to settle down into a job that requires less than your mind's full capacity is to cut your motor and sentence yourself to another kind of motion: decay--that your work is the process of achieving your values, and to lose your ambition for values is to lose your ambition to live--that your body is a machine, but your mind is its driver, and you must drive as far as your mind will take you, with achievement as the goal of your road--that the man who has no purpose is a machine that coasts downhill at the mercy of any boulder to crash in the first chance ditch, that the man who stifles his mind is a stalled machine slowly going to rust, that the man who lets a leader prescribe his course is a wreck being towed to the scrap heap, and the man who makes another man his goal is a hitchhiker no driver should ever pick up--that your work is the purpose of your life, and you must speed past any killer who assumes the right to stop you, that any value you might find outside your work, any other loyalty or love, can be only travelers you choose to share your journey and must be travelers going on their own power in the same direction.

- This strand of libertarian, laissez-faire "creativity" resurfaced in Ronald Reagan's 1965 campaign theme, "The Creative Society." From Gerard DeGroot's Selling Ronald Reagan: The Emergence of a President:

Over time, 'The Speech' morphed into 'The Creative Society,' a vague plan of action. The New Deal would be rolled back, setting the people free to use their creative instincts.

...Reagan took McBirnie's Creative Society idea and explained it in his own words: 'there is present within the incredibly rich human resources of California the dynamic solution to every old or new problem we face. The task of a state government... is to creatively discover, enlist, and mobilize those human resources....' A position paper elaborated:

"Paternalistic government can solve many problems for the people; but the inexorable price it must extract is power over them, ever increasing power at the cost of ever decreasing individual freedom. The Creative Society idea is that the government shall lead and encourage people to participate in a partnership to solve their own problems, as close to home as possible."

- From Matthew Dallek's The Right Moment: Ronald Reagan's First Victory:

BOTH CANDIDATES were striving toward an agreeable middle that in both spirit and rhetoric would be easier for Reagan to find. Indeed, the middle was rushing toward Reagan, and he had only to convince it that he was not a reactionary Republican but a bold-thinking conservative with fresh ideas and programs. To do this he reemphasized a campaign slogan, suggested to him by W. S. McBirnie, a right-wing preacher, that he had first utilized during the primary. The Creative Society, as McBirnie dubbed it, helped Reagan style himself as a kind of new-look conservative, a forward-looking right-wing Republican with a positive approach to the problems of modern society. The slogan also allowed Reagan to distance himself from the dour anticommunist ideology that he had championed just a few years earlier; and it gave him a way to match the soaring rhetoric emanating from the liberal camp. Though it never received as much attention as John Kennedy's New Frontier or Lyndon Johnson's Great Society, the slogan did lend a coherence to Reagan's campaign, blending his strident appeals for law and order with his emphasis on individual initiative.

By stressing self-help and the efficacy of private enterprise, McBirnie offered Reagan a way to couch conservative attacks on big government in a more positive light and a way to distance himself from the hackneyed ideas and antiquated programs of the American Right. Republicans, McBirnie said, "could unite on this concept. . . . I know in my heart something like this is the very thing needed to transform our campaign into a crusade! . . . It really could have national repercussions if it can be made to work in California."

McBirnie's words were Victory's fuel; the man himself was a liability. The host of a radio program that mixed pulpit preaching with the shrill rhetoric of the anticommunist Right, the reverend was a controversial figure. Though not a member of the John Birch Society, he incarnated the kind of conservative activist that had sent chills down the spines of Reagan's handlers. He was a danger in other ways as well. In June 1959, McBirnie had resigned as pastor of the Trinity Baptist Church in San Antonio, Texas, after confessing that he had slept with the wife of a former parishioner. "Satan has taken advantage of the Church's weakest link, me," McBirnie explained in his resignation speech, "but I can't even blame Satan. I can only blame myself." Following his admission, McBirnie divorced his wife of nineteen years and, a few days later, married his longtime lover.

At least his Creative Society slogan was a winner. A Reagan campaign memo suggested the benefits of adopting McBirnie's program; the Creative Society, Reagan's aides argued, would convey to voters the message that:

"There [was] no area of human need and no commonly shared problem which cannot be tackled and solved. The big question is not whether our national and state human problems shall be solved but how. Republicans have sometimes been caricatured as people who want to turn back the clock, to return to the status quo. But, on the contrary, our eyes are turned to the future for that is where we are going to live. . . . If the socialistic type of government is called the "Great Society," which it is mistakenly called, let us propose another, a better idea, a happy and constructive alternative: THE CREATIVE SOCIETY. The premise of the "Creative Society" is that there is already or potentially present within the incredibly rich human resources of our state, the full solution to every problem which we face. . . . The "Creative Society" seeks to go beyond bleeding heart or bumbling "liberalism". . . [and achieve] the ultimate in personal liberty consistent with law and order."

- Whiting Williams was a member of the Board for Individual Awards

- Finding aid for Anthony Luchek, economist with the War Production Board, at Penn State [link]

- Prince "Blast From the Past 4.0" discussions [1 and 2]

- 2016 National Occupational Employment and Wage Estimates [link]

- A timeline of women's fashion from 1784-1970 [via]

- Inger L. Stole - Advertising at War: Business, Consumers, and Government in the 1940s

- Eisenhower's "Advanced Study Group"

- Mentioned in Osborn's Your Creative Mind

- C. Richard Nelson - The Life and Work of General Andrew J. Goodpaster: Best Practices in National Security Affairs [link]

- "Dream sessions" unbound by everyday constraints

- National Archives Catalog page for National Inventors' Council [link]

- Administrative History of the National Inventors Council [link]

- Marcia Holmes - "Performing Proficiency: Applied Experimental Psychology and the Human Engineering of Air Defense, 1940-1965"

- Hidden Persuaders blog on Cold War-era psychology [link]

- Meg Jacobs, Princeton University, good resources on late 20th C. business [link]

- Walter A. Harris, Developer Of IBM Suggestion System [link]

- Walter Harris, President of NASS, presents plaque to Assistant Secretary of Army, Hugh M Milton III [link]

- Handbook of Research on Employee Voice: Elgar Original Reference [link]

- Jonah Lehrer, "Groupthink," New Yorker [link]

- Comment on Osborn's personal papers [link]

I examined materials in Box 12, Patentable Ideas, circa 1949-1951, thinking they would be Osborn's inventions, but discovered that was not the case. After publication of Your Creative Power in 1948, Osborn was inundated with requests from readers to help them get their ideas produced. It became such a volume of letters that he used a form letter to reply. He acknowledged the difficulty in sending products to a company and sympathized with the writers. Part of the letter explained that he would return their items, but could not comment or review them without a waiver of indemnity. Some of the ideas, names for products, for example, might be things his advertising firm had already conceived.

He was concerned that there was no formal process for people to use for this purpose. He and a friend from England, P. Clavell Blount, wrote to each other frequently trying to get a clearing house for ideas or a National Association of Suggestion Systems underway in either country.

- Brief bit of Charles Clark bio [link]

Charles H. Clark of Sebring was one of the first four creativity experts inducted into the Creative Problem Solving Institute's Hall of Fame at the leadership banquet last month at the Mark Adams Hotel, Buffalo, N.Y.

The CPSI is the major international conference sponsored by the Creative Education Foundation in Hadley, Mass., an authority on creativity, innovation and problem-solving. Typically, it attracts about 900 individuals from all 50 states and 30 countries.

Clark is an educational innovator, author of the book "Brainstorming," and past president of Yankee Ingenuity Programs. Recognized as a creative pioneer, he originated numerous methods to trigger creative thinking in churches, associations, government and business.

In 1985, the Odyssey of the Mind Organization gave him its annual Lipper Award for his contributions toward developing creativity. The following year, he was among the first to receive the Creative Education Foundation's Service and Commitment Award for more than three decades of volunteer service.

In 1990, the foundation bestowed its highest honor, its Distinguished Leader Award, for profound contributions to the creativity movement worldwide as a distinguished researcher, author and teacher.

A Harvard graduate who earned his master's degree in education from the University of Pennsylvania, he served as senior education and training consultant for the BF Goodrich Institute for Personnel Development at Kent State University; was president of Idea Laboratory, Pittsburgh; and was vice president of the Center for Independent Action. Clark and his wife, Marilyn, live at Copeland Oaks Retirement Complex.

- Finding Aid for "Alex Osborn Creative Studies Collection" at Buffalo State [link]

- Infrastructures of Creativity conference [link]

- Michael Wallis - The Best Land Under Heaven: The Donner Party in the Age of Manifest Destiny

- Yuval Noah Harari - Sapiens: A Brief History of Humankind

- Ursula K. Le Guin on technology:

Its technology is how a society copes with physical reality: how people get and keep and cook food, how they clothe themselves, what their power sources are (animal? human? water? wind? electricity? other?) what they build with and what they build, their medicine - and so on and on. Perhaps very ethereal people aren't interested in these mundane, bodily matters, but I'm fascinated by them, and I think most of my readers are too.

Technology is the active human interface with the material world.

But the word is consistently misused to mean only the enormously complex and specialised technologies of the past few decades, supported by massive exploitation both of natural and human resources.

This is not an acceptable use of the word.